From Volume to Grade: The Color Pipeline I Need as a Colorist

Most LED volume footage I get into a grading suite looks fine on set and falls apart the moment I push it. The plates were already cooked. I can still make them look better — I'm just grading around damage that didn't have to be there.

This post is the handoff I want from virtual production teams, so that the image reaches color behaving more like a real scene than a rendered one.

What has to survive capture

Three things, in order:

Stop relationships. If a window is supposed to sit four stops over the interior, that ratio needs to mean something by the time I see it. If it doesn't, the shot stops behaving like light and starts behaving like a picture of light.

Highlight headroom. Rolloff should be progressive, not a wall. The instant a shoulder gets baked upstream, my room for negotiation in the grade goes with it.

Color separation at the top end. Bright regions should still hold chroma. Skies that go to a single hue, windows that collapse to a flat panel — those are tells that something display-referred touched the plate before the camera did.
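All three properties come down to a little arithmetic. Here is a minimal sketch, assuming plain scene-linear float values. A stop is a doubling of light, so the stop difference between two values is log2 of their ratio. The Reinhard curve x / (1 + x) stands in for "a progressive rolloff", and a per-channel clip stands in for "a wall". Neither models any particular camera, LUT, or display transform. The chroma measure is a crude (max − min) / max proxy, chosen only for illustration.

```python
import math

def stops_between(a: float, b: float) -> float:
    """Stop difference between two scene-linear values: log2 of their ratio."""
    return math.log2(a / b)

def hard_clip(x: float, ceiling: float = 1.0) -> float:
    """A 'wall': every value above the ceiling lands on the same number."""
    return min(x, ceiling)

def soft_shoulder(x: float) -> float:
    """Illustrative progressive rolloff (Reinhard, x / (1 + x)); a stand-in only."""
    return x / (1.0 + x)

def chroma(rgb: list) -> float:
    """Crude saturation proxy, (max - min) / max; 0.0 means neutral."""
    hi, lo = max(rgb), min(rgb)
    return 0.0 if hi == 0 else (hi - lo) / hi

base = 0.18                 # mid-grey interior reference
window = base * 2 ** 4      # window authored four stops over the interior
print(stops_between(window, base))                   # 4.0

# Headroom: +4 and +6 stop references through a wall vs a rolloff.
plus4, plus6 = base * 2 ** 4, base * 2 ** 6
print(hard_clip(plus4) == hard_clip(plus6))          # True: the wall erases the difference
print(soft_shoulder(plus4) < soft_shoulder(plus6))   # True: the ordering survives

# Top-end color: a bright, warm sky value clipped channel by channel.
sky = [4.0, 2.4, 1.2]
clipped = [hard_clip(c) for c in sky]
print(chroma(sky) > 0.5, chroma(clipped))            # True 0.0: the clipped sky is neutral white
```

The soft shoulder still compresses the ratios. What it preserves is the ordering, which gives the grade something to negotiate with. The clip leaves nothing.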

Scene-referred in, scene-referred through

I don't care where the wall content came from. Captured HDR plates, Unreal renders, full CG environments — fine, all of it. What I care about is how it gets to me.

Wide-gamut. Scene-linear. Not display-baked. If those three are intact, the path from wall playback to final grade can stay coherent. If any of the three is gone, I'm reverse-engineering somebody's creative decisions before I get to make my own.

Unreal: render like you're handing scene data to post

Because that's what you're doing.

Render through Movie Render Queue. Export OpenEXR, 16-bit half float at minimum. Use OCIO/ACES-managed output, targeting a scene-linear wide-gamut interchange — ACEScg / linear AP1 is the obvious one. Nothing display-referred bakes into the plate: no Rec.709 output intent, no filmic tonemapper, no creative LUT burned in for the look of it.
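Half float is the floor because scene-linear highlights routinely sit above 1.0. Binary16 (OpenEXR's half type, maximum 65504) stores them to roughly three significant digits. An integer [0, 1] container has to clamp them. The sketch below round-trips a few highlight values through both containers using Python's `struct` `e` format. The 8-bit path is a bare clamp-and-quantize that deliberately ignores any display encoding curve, so read it as an illustration of the ceiling rather than a model of a real SDR encode.

```python
import struct

def to_half(x: float) -> float:
    """Round-trip a value through IEEE 754 binary16, the 'half' type OpenEXR stores."""
    return struct.unpack("<e", struct.pack("<e", x))[0]

def to_8bit(x: float) -> float:
    """Round-trip through an integer [0, 1] container: clamp, then quantize to 255 steps.
    Deliberately ignores any display encoding curve."""
    code = round(min(max(x, 0.0), 1.0) * 255)
    return code / 255

grey = 0.18
for n in (2, 4, 6, 8):                      # highlights +2 .. +8 stops over grey
    v = grey * 2 ** n
    print(f"+{n} stops: scene {v:7.3f}  half {to_half(v):7.3f}  8-bit {to_8bit(v):.3f}")
```

From +4 stops up, every value comes out of the 8-bit path as 1.0. The half values stay within a fraction of a percent of the originals.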

People say "linear gamma" when they mean scene-linear transfer. The distinction matters when you're trying to write down what was actually exported, so write down what was actually exported.

The wall processor's job is not the colorist's job

Most of the confusion I see on set comes from one mistake: mixing the technical mapping and the creative look in the same step.

The wall processor's job is to take scene values and put them on panels with a limited luminance range and gamut. That's a technical transform. The creative look (show identity, finishing personality, the rolloff that makes the thing feel like the thing) is mine. If the processor handles the wall mapping cleanly and stays creatively neutral, I can shape final rolloff against the actual scene and the actual cut. If creative decisions are baked before capture, post inherits them permanently, and "permanently" is the word I want you to sit with.

Why SDR plates hurt

A display-referred SDR background arrives with a shoulder already in it and the highlights already compressed. Predictable consequences:

Windows and skies go plasticky the moment exposure moves. Skin against background never quite sits. Bright color separation collapses earlier than it should. Secondaries get unstable when look development starts pulling on them.

All of that is mitigable. Mitigation isn't preservation. I'd rather have the latitude than the rescue.
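A toy comparison shows why the baked shoulder costs latitude. It assumes a Reinhard curve as a stand-in for whatever SDR transform was baked, plus a hard ceiling at 1.0 for the SDR container. A window authored four stops over the interior gets pushed one stop in the grade, once from a scene-linear plate and once from a baked one.

```python
import math

def baked_shoulder(x: float) -> float:
    """Stand-in for an SDR display transform baked into the plate (Reinhard rolloff).
    Real display transforms differ; this only models 'shoulder already in it'."""
    return x / (1.0 + x)

def push(x: float, stops: float) -> float:
    """Exposure change in linear light: multiply by 2**stops."""
    return x * 2.0 ** stops

def sdr_ceiling(x: float) -> float:
    """An SDR container cannot hold anything above 1.0."""
    return min(x, 1.0)

def stops_between(a: float, b: float) -> float:
    return math.log2(a / b)

interior, window = 0.18, 0.18 * 2 ** 4     # window authored four stops over

# Scene-linear plate: push +1 stop, measured before the grade's own view transform.
scene_ratio = stops_between(push(window, 1), push(interior, 1))

# SDR plate: the shoulder is already in the pixels; the grade can only apply gain to what is left.
baked_w, baked_i = baked_shoulder(window), baked_shoulder(interior)
baked_ratio = stops_between(baked_w, baked_i)
pushed_ratio = stops_between(sdr_ceiling(push(baked_w, 1)), sdr_ceiling(push(baked_i, 1)))

print(f"scene-linear, +1 push: {scene_ratio:.2f} stops")
print(f"baked SDR:             {baked_ratio:.2f} stops")
print(f"baked SDR, +1 push:    {pushed_ratio:.2f} stops")
```

The scene-linear plate keeps the four-stop relationship through the push. The baked plate starts well short of four stops and loses more once the pushed window hits the SDR ceiling.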

The on-set test that prevents most of this

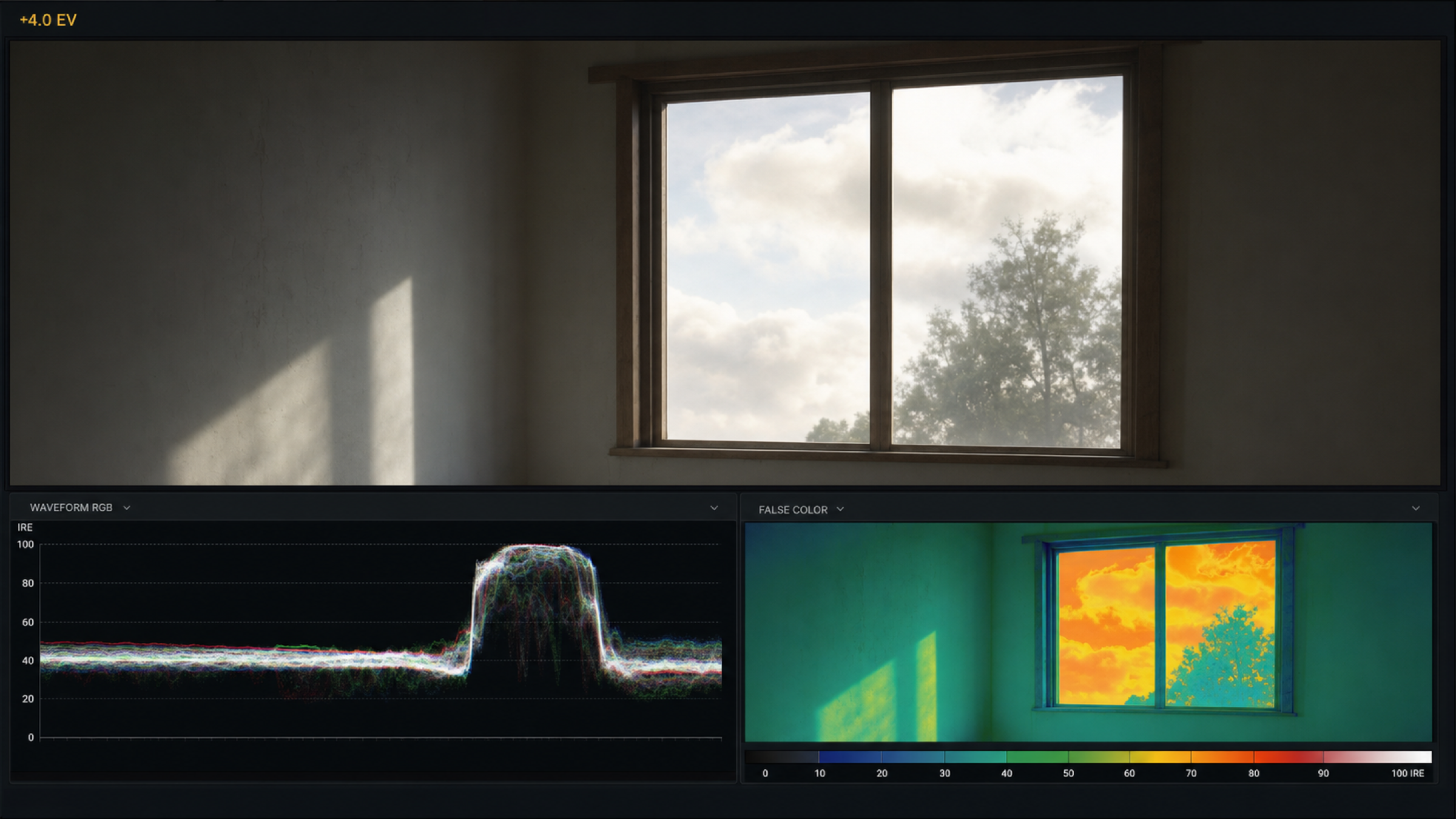

Before principal photography, build a plate with known stop-ratio targets: +2, +4, +6 references against a base. Run it through the camera path and the LUT stack you actually intend to use. Watch the waveform and false color while you push exposure.

If the highlights flatten the moment you ride the iris up, a display transform is firing somewhere it shouldn't. Find it then. Not in the grade.
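You can also script the chart test, assuming you can get linearized patch values out of whatever point in the path you are testing. In the sketch below, `Pipeline` is a hypothetical callable that stands in for the camera path and LUT stack. The 0.25-stop tolerance is an arbitrary assumption, and the "cooked" path reuses the illustrative Reinhard shoulder.

```python
import math
from typing import Callable, Dict, List

Pipeline = Callable[[float], float]   # hypothetical stand-in for camera path + LUT stack

def stop_chart(base: float = 0.18, offsets=(2, 4, 6)) -> Dict[int, float]:
    """Scene-linear patch values: a base plus references at +2, +4, +6 stops."""
    chart = {0: base}
    chart.update({n: base * 2.0 ** n for n in offsets})
    return chart

def measured_stops(chart: Dict[int, float], pipeline: Pipeline,
                   exposure: float = 0.0) -> Dict[int, float]:
    """Push exposure (like riding the iris), run every patch through the pipeline,
    and report each reference's stops over the base patch."""
    gain = 2.0 ** exposure
    out = {n: pipeline(v * gain) for n, v in chart.items()}
    return {n: math.log2(out[n] / out[0]) for n in chart if n != 0}

def compressed_refs(chart: Dict[int, float], pipeline: Pipeline,
                    exposure: float = 0.0, tolerance: float = 0.25) -> List[int]:
    """References whose measured ratio drifted more than `tolerance` stops from target."""
    return [n for n, s in measured_stops(chart, pipeline, exposure).items()
            if abs(s - n) > tolerance]

def clean_path(x: float) -> float:
    return x                    # scene-linear all the way through

def cooked_path(x: float) -> float:
    return x / (1.0 + x)        # a display shoulder firing where it shouldn't (illustrative)

chart = stop_chart()
for exposure in (0.0, 1.0, 2.0):
    print(exposure, compressed_refs(chart, clean_path, exposure),
          compressed_refs(chart, cooked_path, exposure))
```

An empty list at every exposure means the ratios held. Any offsets listed tell you which references flattened, and at which push.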

What I want in the handoff

Files aren't the handoff. Files plus context are the handoff.

Plate source color space and transfer. The OCIO/ACES config version that was running on set. Camera color management path and any viewing LUTs. Wall mapping assumptions and constraints. The test captures showing how stop ratios actually behaved.

When that documentation isn't there, the first day in color is forensics. I'd rather spend it grading.
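One way to make the handoff list hard to skip is to treat it as a record that gets checked before files leave set. This sketch uses hypothetical field names that mirror the list above and don't follow any published schema. It also flags the vague "linear gamma" wording, so the transfer gets written down as what was actually exported.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional

@dataclass
class VolumeHandoff:
    # Hypothetical field names that mirror the handoff list; not a published schema.
    plate_color_space: Optional[str] = None    # e.g. "ACEScg"
    plate_transfer: Optional[str] = None       # e.g. "scene-linear"
    ocio_config_version: Optional[str] = None  # the config actually running on set
    camera_color_path: Optional[str] = None    # camera color management and viewing LUTs
    wall_mapping_notes: Optional[str] = None   # wall mapping assumptions and constraints
    stop_test_captures: List[str] = field(default_factory=list)  # stop-ratio test clips

# Wording that doesn't say what was exported (the "linear gamma" problem).
VAGUE_TRANSFERS = {"linear", "linear gamma"}

def handoff_problems(h: VolumeHandoff) -> List[str]:
    """List every missing item, plus a transfer description too vague to act on."""
    problems = [f"missing: {f.name}" for f in fields(h) if not getattr(h, f.name)]
    transfer = (h.plate_transfer or "").strip().lower()
    if transfer in VAGUE_TRANSFERS:
        problems.append(f"ambiguous transfer {h.plate_transfer!r}: write down what was exported")
    return problems

draft = VolumeHandoff(plate_color_space="ACEScg", plate_transfer="linear gamma")
for problem in handoff_problems(draft):
    print(problem)
```

The draft above would report four missing items and the ambiguous transfer. That list is the forensics, moved from the first day in color to the last day on set.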

What this is actually for

Not technical purity. Faster finish, fewer compromises, and a foreground/background that integrates without me forcing it. Cleaner rolloff decisions made in context. Consistency across deliverables instead of per-shot rescue.

If the wall plate preserves scene relationships, the grade gets to be about story. If it doesn't, the grade is about damage control with a polite name on it.

Protect the scene-referred chain end to end and what reaches color behaves like photography. That's the whole post.